In the last two parts of this series, we discussed our network protocol and the architecture of our body camera system. We shall discuss our backend recording and streamers service in this final part. After that, we shall present the budget we burnt for this MVP, and finally, we shall discuss why it did not work.

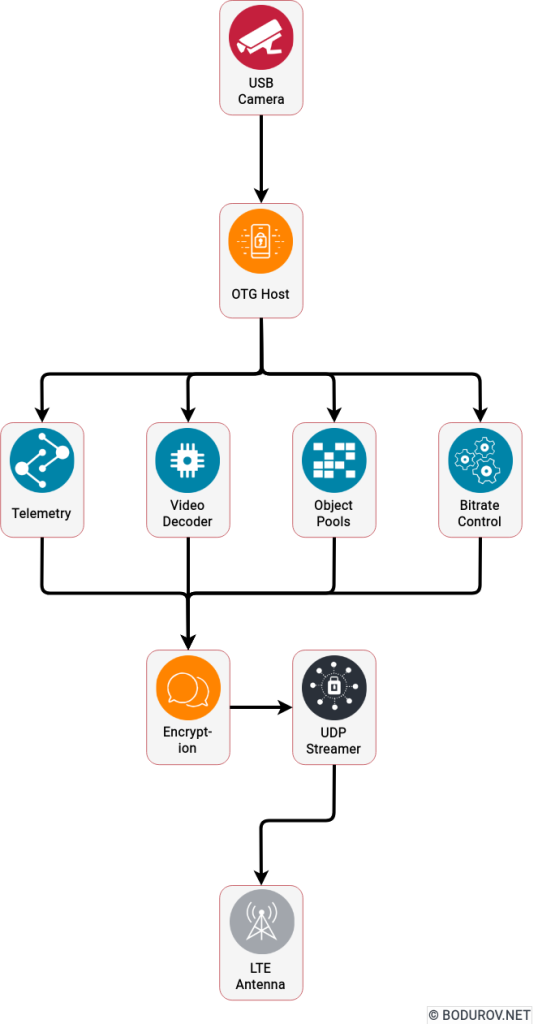

There are multiple server video streaming solutions across the market. Unfortunately, most of them require installing new desktop software or plugins. At the same time, we saw that no video storage format is codec agnostic and could support multiple frames using different codecs. All these weaknesses forced us to develop our storage format for our videos. After a reasonable amount of time of thinking about what would be the format we need for this kind of work, we formulated the following requirements:

- Blazing fast append: We wanted to write down the incoming frames as fast as possible. Every slowdown of reindexing the frames would decrease performance and increase the cost.

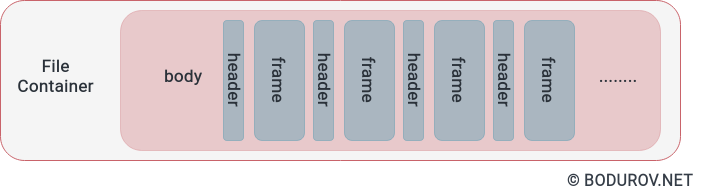

- File-based: Storing a significant amount of data into a database is the wrong approach for media-based files. So the file format had to be binary based. Because of the previous requirement, we had to skip the index. Every index recalculation would end up in a lousy performance.

- To support telemetry: We did not stream only video and audio data, but we also streamed telemetry. There had to be a way to stream its frames as well. Plus, there were use cases in which the user of the body camera could want to stream only telemetry, but not video.

- Websocket streaming: Since we can not support another streaming format, we decided that streaming from our server to the web browser clients in the headquarters will be based on WebSockets. To do this properly, we had to implement our video player.

Fortunately, if we analyze our network protocol, we can see the following characteristics which will fulfill the requirements:

- Partitioning: Every network packet has a unique user id and date. And that is enough to determine the filename of the stream uniquely.

- Counter: Every network packet has a unique counter value, and this value is attached to the date. At the beginning of every day, we moved the counter to zero. If we analyze further the logic of this counter, it could be used as an index key, by which we can sort by time the whole stream.

- Supports telemetry: Our network protocol supports telemetry by default.

- Supports WebSockets: We can reuse the same binary message we received from the Android device for WebSocket streaming. The message must be just encoded properly for WebSocket streaming.

With the information from the previous bullets, we can define the following logic. We append every incoming packet to its corresponding file on the filesystem, similarly to what pcap is doing with the network packets. At the same time, another process is reading the file and building the index in memory of our service. And the service uses this index to restream the recorded network packets through web sockets to the web browser player.

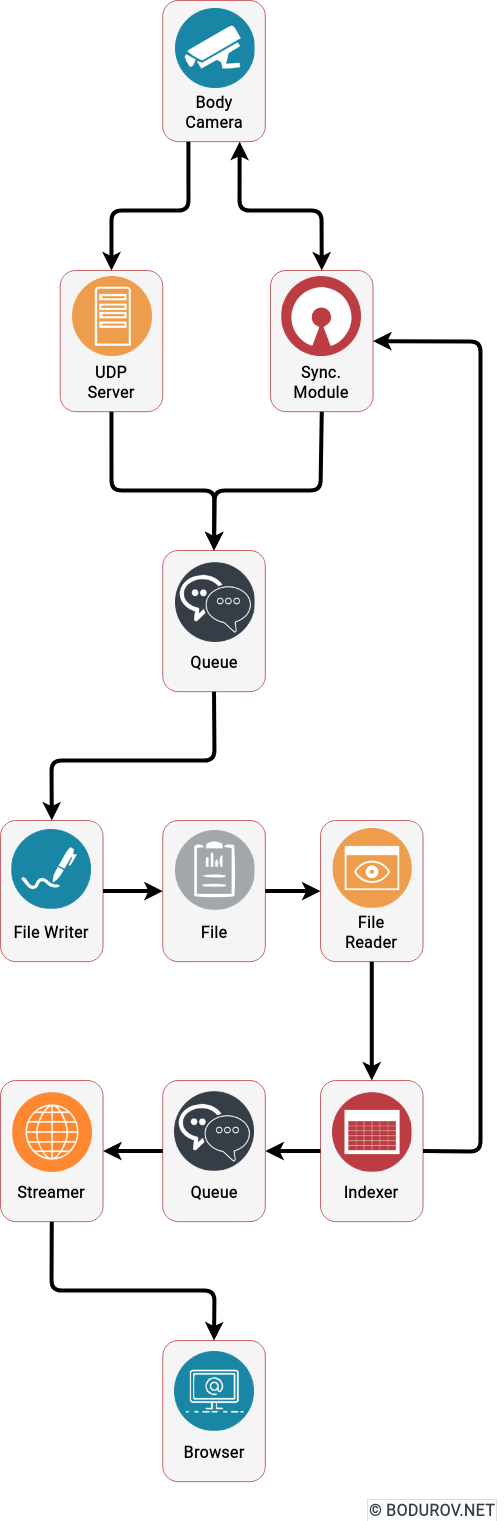

To implement the described logic, we decided to build the following system modules:

- UDP server listener module: The idea of this module is to listen for UDP packets and reroute them to a concurrent queue. The FileWriter module later consumes this concurrent queue.

- Concurrent queue module: Having in mind that we can have multiple process workers, instead of using mutexes or any other synchronization mechanisms, we decided to communicate using queues between the processes.

- FileReader module: This module’s primary duty is to read the file packet by packet, using the already loaded index.

- FileWriter module: The idea of this module is to take the packets from the queue and store them into the file. Partitioning per file per queue was implemented, and every file had a FileWriter process.

- Indexer module: It reads the file and indexes the network packets into the memory. After that, it is used by the Streamer module to stream data.

- Streamer module: This was a collection of processes that started by given offset and used the indexer module to send data to the WebSocket server.

- Web browser player module: The module was used to decode the network packets coming from the WebSocket server and play video and telemetry data in the browser.

- Synchronization module: The idea of this module was to provide a way for the synchronization of missing packets between the Android device and the streaming server. We used the index module to return a given user and date for which frames are missing.

One can easily modify the proposed architecture to support cloud deployment and high scalability by replacing the concurrent queues with message brokers and the local filesystem with GlusterFS.

After we finished the technical details of the implementation, let’s discuss how much it cost for us to implement the MVP:

Budget:

- Android device (200$): We decided to use a standard Redmi 3 device. It supported all the needed features, and it had an excellent battery.

- Extended battery development (3000$): We developed two battery versions because the first one was not good enough, and our hardware vendor did not provide a quality job. We had to switch vendors, etc.

- USB Camera (200$): Fortunately, we used an already produced board, so the price was relatively low. Still, we had to buy multiple cameras until we found the most suitable one.

- 3d printing (400$): 3d printing of multiple prototypes is expensive. And we had to try with various variations.

- Camera mounts (30$): The camera mounts were already manufactured.

- Software development (23000$): One developer spent a whole year working part-time on this project. He implemented the backend server and the mobile application for the Android device.

- Hardware development (8000$): Our hardware guy spent a couple of months developing proper housing and an alternative battery unit for our Android device.

- Business development (1500$): Fortunately, we did not spend a lot of money on business development.

So we managed to implement the technical MVP for a total cost of 36330$. We tried to sell it, and we failed brutally.

Why we failed

As a team without experience in developing hardware products, we made many errors. Fortunately, it was our first and last try at selling a hardware product. We took our lessons, which I shall list:

- No business need: For every successful product, you need a local business need, with which you can test your idea and see whether you will have traction. Burgas is an almost crime-free city, so no need for such a system.

- No hardware ecosystem: There is no ecosystem of electronic hardware manufacturers in Burgas. So you become dependent on people you do not know and even have never met.

- No delivery pipelines: Making hardware depends on your components delivery pipelines. We did not have any established channels and no partners with such.

- No investor: Hardware startups are not for bootstrapping. You need a good amount of money to hire the proper people and to make sure once you have MVP, you can buy a good amount of items. Hardware items supply can end, and after that, you have to redesign your solution.

- Wrong paradigm: Hardware products do not scale so much as digital ones. It will help if you have a good global distribution network and marketing to do it successfully. Being in the 4th city by size in Bulgaria, with 200,000 people, did not help.

In conclusion, despite the problems, we managed to produce MVP, which is a piece of fantastic news. Unfortunately, we could never sell this MVP to anyone for the listed reasons. Looking at the good side of things, we learned what mistakes to avoid when penetrating a market. It helped us with our following products.